Companies mentioned: NVDA, AMD, INTC

At this year’s Supercomputing conference in Denver, NVIDIA pulled the lid off a new* product they’re bringing to market.

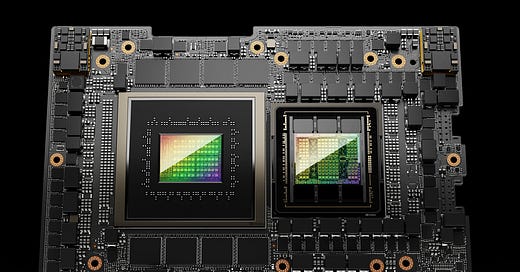

Dubbed the H200, it seeks to address the immediate needs of AI and HPC customers whose workloads hunger for one thing more than nearly anything else: lots of fast memory. H200 is not a revelation in co…